Jai Aggarwal, March 11, 2026

Intro

From ChatGPT to Sora, AI tools have increasingly become a part of daily life. The growth in AI usage stems from two main improvements over the last decade: performance and scalability. To achieve the kind of performance and scale of ChatGPT and Sora, companies need a lot of processing power – the kind you can’t get from simply keeping an AI model in a typical building. Instead, companies rely on AI data centers: specialized buildings designed for the sole purpose of hosting machine learning models. In this tech brief, we will look under the hood of the infrastructure that enables AI, explaining what data centers are, why they are necessary, and how – despite the claims of Big Tech – they seem to cause more problems than they solve (Kneese & Woluchem, 2025).

What are data centers?

Processing power for AI applications comes from Graphics Processing Units (GPUs). GPUs are specialized hardware that enable parallel processing – the processing of many inputs at the same time – allowing AI models to run extremely quickly. The sheer scale of AI usage means that a single GPU isn’t enough; hundreds of thousands of GPUs are required to meet modern demand for AI.

AI data centers are specialized facilities designed to house large numbers of GPUs. Their unique needs call for infrastructure that is distinct from that of traditional data centers.

Electricity

First, AI data centers require an immense amount of electricity. Compared to the more common Central Processing Unit (CPU), a GPU can require two to four times as much electricity (Pew Research Center, 2025). While GPUs are more efficient than CPUs for this kind of work, the sheer number of GPUs in a single location means that an AI data center can draw between 10 and 25 MW of power (IEA, 2025), as much as 10,000 to 25,000 individual households. Particularly large facilities, called hyperscalers, can use as much electricity as 100,000 households (IEA, 2025).
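The household comparison can be reproduced with a back-of-the-envelope conversion. The sketch below assumes the facility figure is an average power draw in megawatts and that a household averages about 1 kW of continuous demand (roughly 8,760 kWh a year). Both are illustrative assumptions implied by the ratio in the text, not figures taken from the IEA, and the function names are hypothetical.

```python
HOURS_PER_YEAR = 8760

# Assumption: an average household draws about 1 kW continuously.
# This is an illustrative value, not a figure from the cited sources.
ASSUMED_HOUSEHOLD_KW = 1.0


def annual_energy_mwh(avg_power_mw: float) -> float:
    """Energy used in one year (MWh) by a facility drawing avg_power_mw around the clock."""
    return avg_power_mw * HOURS_PER_YEAR


def household_equivalents(avg_power_mw: float,
                          household_kw: float = ASSUMED_HOUSEHOLD_KW) -> float:
    """Number of households whose average demand adds up to the facility's draw."""
    return avg_power_mw * 1000 / household_kw  # 1 MW = 1000 kW


for power_mw in (10, 25):
    print(power_mw, "MW:",
          annual_energy_mwh(power_mw), "MWh per year,",
          household_equivalents(power_mw), "households")
```

Changing `ASSUMED_HOUSEHOLD_KW` shows how sensitive the comparison is to the household baseline, which varies considerably between countries.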

Cooling

A second major demand of AI data centers is cooling. Running high-performance computing infrastructure around the clock generates a large amount of heat, which may lead to equipment failure or critical hardware damage if left unchecked. AI data centers are equipped with liquid cooling systems to combat overheating, well beyond the infrastructure required for traditional data centers. As with powering the hardware, cooling infrastructure also requires access to electricity; between 7 and 30 percent of electricity usage of AI data centers is allocated specifically towards cooling (Pew Research Center, 2025).

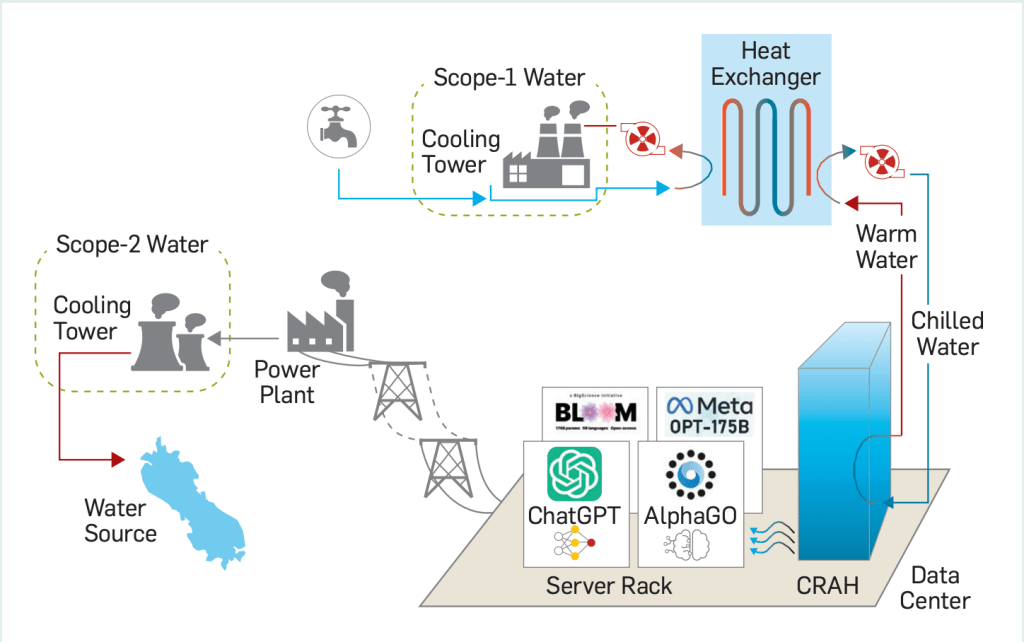

These cooling systems also demand constant access to immense amounts of fresh water. The image above (taken from Li et al., 2025) showcases two of the main ways that AI data centers use water. Scope-1 (at the top) illustrates how local water resources are used for on-site liquid cooling: chilled water passes through server racks, absorbs heat from the hardware, and is re-cooled using a heat exchanger (which itself draws cold water from a cooling tower). Scope-2 (lower-left) visualizes off-site water demand: water is consumed by the power plants that meet the data center’s massive electricity needs.

Accurate records of water usage are difficult to gather, as companies rarely disclose their true usage data (CBC, 2025). Recent reports of internal Microsoft forecasts suggest that water usage for about 100 of its data centers will rise from 7.9 billion litres in 2020 to between 18 and 28 billion litres in 2030, an increase of roughly 130 to 250 percent (New York Times, 2026).
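The percentage range follows from the standard percent-change formula. As a quick check, the sketch below applies it to the volumes quoted above; the function name is illustrative, and the inputs are only the figures reported in the text.

```python
def percent_increase(old: float, new: float) -> float:
    """Relative change from old to new, expressed as a percentage of old."""
    return (new - old) / old * 100


baseline_2020 = 7.9                 # billion litres, as quoted in the text
low_2030, high_2030 = 18.0, 28.0    # billion litres, forecast range in the text

print(round(percent_increase(baseline_2020, low_2030)))   # 128
print(round(percent_increase(baseline_2020, high_2030)))  # 254
```

The results, about 128 and 254 percent, are close to the rounded range given in the text.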

Downstream Effects of AI Data Centers

The intense resource usage of AI data centers has major financial and ecological consequences, particularly for those living in their vicinity. We highlight a few here; for more comprehensive reviews, see Sharma (2024), Kneese & Woluchem (2025), and Nguyen & Green (2025).

A major financial cost already affecting communities around the globe is a spike in monthly utility bills. Increased demand for electricity and fresh water is often met from local grids and water supplies, straining the resources available to the general public. For example, a recent Bloomberg analysis found that American residents living near data centers have seen monthly electricity costs increase by up to 267% between 2020 and 2025 (Bloomberg, 2025).

From an ecological perspective, where reliable renewable energy sources are unavailable, data centers are powered by non-renewable sources, with important downstream impacts on carbon emissions (Nguyen & Green, 2025). Recent estimates suggest that 82% of global energy demand for data centers is supplied by fossil fuels, including fracked gas (Kneese & Woluchem, 2025). In a particularly egregious (and hopefully anomalous) case, xAI installed gas turbines to help power its Memphis data center, despite lacking the appropriate permits (NAACP, 2025). The company now faces legal action from the NAACP, as the concentrated increase in emissions poses health risks to local residents (NAACP, 2025).

Summary

While AI data centers are required to meet the infrastructural needs of the modern AI boom, they also raise a number of important questions about how to do so sustainably. Existing deployments strain local resources and contribute to large-scale emissions, even though it remains unclear how financially and socially profitable AI stands to be in the near future. To help address the concerns and demands of technologists, legislators, and local residents, increased transparency from AI companies is crucial.